Your Brain on ChatGPT: A Reality Check on “Brain Rot”

I asked an LLM to write this script about whether ChatGPT is bad for your brain. The irony isn't lost on me.

But here's what actually concerns me: I can't remember the last time I sat down to write something difficult from scratch and really struggled with it. I mean that productive frustration of searching for the right words, that mental friction of organizing complex thoughts into coherent paragraphs.

Now? I outline, paste into ChatGPT, edit the output. It's faster. It's cleaner. And if I'm being honest, my brain barely has to work.

That realization hit me last week when I tried to recall the key points from meeting notes I'd "written" two days earlier. I drew a complete blank. Not because I'm losing my memory, but because I never really encoded those ideas in the first place. My brain was a passenger, not the driver.

So when headlines started screaming about "ChatGPT brain rot," I didn't dismiss them. But I also didn't panic. Because the reality is more nuanced than either extreme, and more interesting than the viral panic suggests.

The Viral Concern: What People Are Actually Worried About

If you've spent any time on social media lately, you've seen the discourse: ChatGPT is making us stupid. AI is rotting our brains. We're outsourcing our thinking and losing our ability to think.

Let's be clear: "brain rot" isn't a medical diagnosis. It's provocative language designed to get clicks. But underneath the sensationalism, there's a legitimate intuition that resonates with a lot of us, especially those of us using AI tools daily.

The concern boils down to this: When we offload cognitive work to AI, are we weakening the mental muscles we'd otherwise be exercising?

It's not a crazy question. We've seen this pattern before. GPS changed how we navigate; I've become so dependent on it that I couldn't get anywhere outside the city without Google Maps. Spell-check changed how we write; many of us have become worse spellers because we know the red squiggle will catch our mistakes. Calculators changed math education, and there's ongoing debate about whether students who reach for calculators too early ever develop number sense.

So when architecture professionals like you (and me) are using ChatGPT 10-15 times per week to draft meeting notes, write emails, brainstorm design concepts, and research building codes... yeah, the question matters. We're not just occasionally using a tool. We're integrating it into the core cognitive work of our profession.

The viral anxiety taps into something real: the sense that our brains are working differently, and we're not sure if that's good or bad yet.

The takeaway: The "brain rot" narrative is hyperbolic, but the underlying concern that overreliance on AI might affect our cognitive capabilities deserves serious consideration.

The Neuroscience Reality: What Actually Happens When We Offload Thinking

Here's what we need to understand: your brain isn't a static organ. It's adaptive, plastic, constantly rewiring based on what you practice. This is both the good news and the cautionary tale.

Cognitive Offloading 101: Not All Offloading Is Bad

Cognitive offloading, using external tools to reduce mental effort, is something humans have done forever. Writing is cognitive offloading (you don't have to memorize everything). Grocery lists are cognitive offloading. Bookmarks are cognitive offloading. This is normal and often beneficial because it frees up mental resources for higher-order thinking.

The question isn't whether we should offload. It's what we offload and when.

When you use a calculator to compute 3,847 × 162 so you can focus on whether the structural load calculation makes sense, that's strategic offloading. You're using the tool to bypass tedious execution so you can focus on conceptual thinking.

When you use ChatGPT to generate an entire design rationale without first thinking through your actual design reasoning, that's something different. You're not just offloading execution, you're offloading the thinking itself.

The Generation Effect: Why Creating > Consuming for Memory

Here's a fundamental principle from cognitive psychology: we remember information dramatically better when we generate it ourselves rather than passively receive it.

This is called the generation effect, and it has been replicated across many studies. Related research points the same way: some studies have found that students who take handwritten notes (which forces them to paraphrase and synthesize) outperform students who type lectures verbatim. People who explain concepts in their own words retain those concepts far better than people who only read explanations.

Why? Because generation requires your brain to actively encode information. You have to retrieve relevant knowledge, make connections, find the right words, organize the logic. That cognitive effort creates stronger neural pathways and better memory consolidation.

When you ask ChatGPT to write your project description and copy-paste it into your proposal, you're skipping the generation step entirely. Your brain never had to wrestle with articulating why this design matters. It never encoded that information deeply because it never had to work for it.

This isn't "brain damage." It's just how memory works. No generation = weak encoding = poor retention.

Desirable Difficulty: When Struggle = Learning

Research on learning reveals something counterintuitive: making things easy doesn't optimize learning. Making things appropriately difficult does.

This concept of desirable difficulty explains why testing yourself is more effective than re-reading notes. Why spacing out practice beats cramming. Why struggling to recall information strengthens memory better than looking up the answer immediately.

The struggle isn't a bug in the learning process. It's the feature. It's what forces your brain to strengthen connections, build new pathways, and consolidate information into long-term memory.

ChatGPT removes struggle. That's literally its value proposition: it makes things easier. But when we use it to bypass the cognitive effort of thinking through complex problems, we're also bypassing the mechanism that makes us better thinkers.

Think about it: When was the last time you really struggled to articulate a complex idea in writing? That frustration, that mental friction of trying to organize your thoughts, that's your brain doing the work that makes those neural connections stronger.

Neuroplasticity: The Double-Edged Sword

Your brain is constantly rewiring based on what you practice. This is neuroplasticity, and it's a double-edged sword.

The good news: If you've been over-relying on AI, your brain isn't permanently damaged. Cognitive skills are trainable. You can rebuild the habits and neural pathways that support deep thinking, strong memory encoding, and independent problem-solving.

The cautionary tale: "Use it or lose it" applies to cognitive skills just like physical skills. If you stop practicing the mental effort of writing, your brain will reallocate those resources. You won't forget how to write, but you'll get worse at the hard parts. Articulating complex thoughts will feel harder. Finding the right words will take longer. Organizing your ideas logically will require more effort.

This isn't because AI "rotted" your brain. It's because you stopped practicing those skills regularly, and your brain adapted to the new pattern.

The Critical Distinction: Partner vs. Replacement

Here's the framework that matters: Is AI your thought partner or your thought replacement?

Thought partner: You draft your ideas first. You think through the logic. You articulate your reasoning even if it's messy. Then you use AI to refine, expand, improve. You maintain cognitive ownership because the thinking originated with you.

Thought replacement: You describe what you need to AI. AI generates it. You copy-paste. Your brain was barely involved in the cognitive work. You have efficiency but not encoding.

The difference isn't about AI being "good" or "bad." It's about which cognitive skills you're practicing and which you're atrophying.

The takeaway: AI changes which cognitive skills you exercise. The question isn't whether to use AI; it's how to use it in ways that maintain the mental capabilities you don't want to lose.

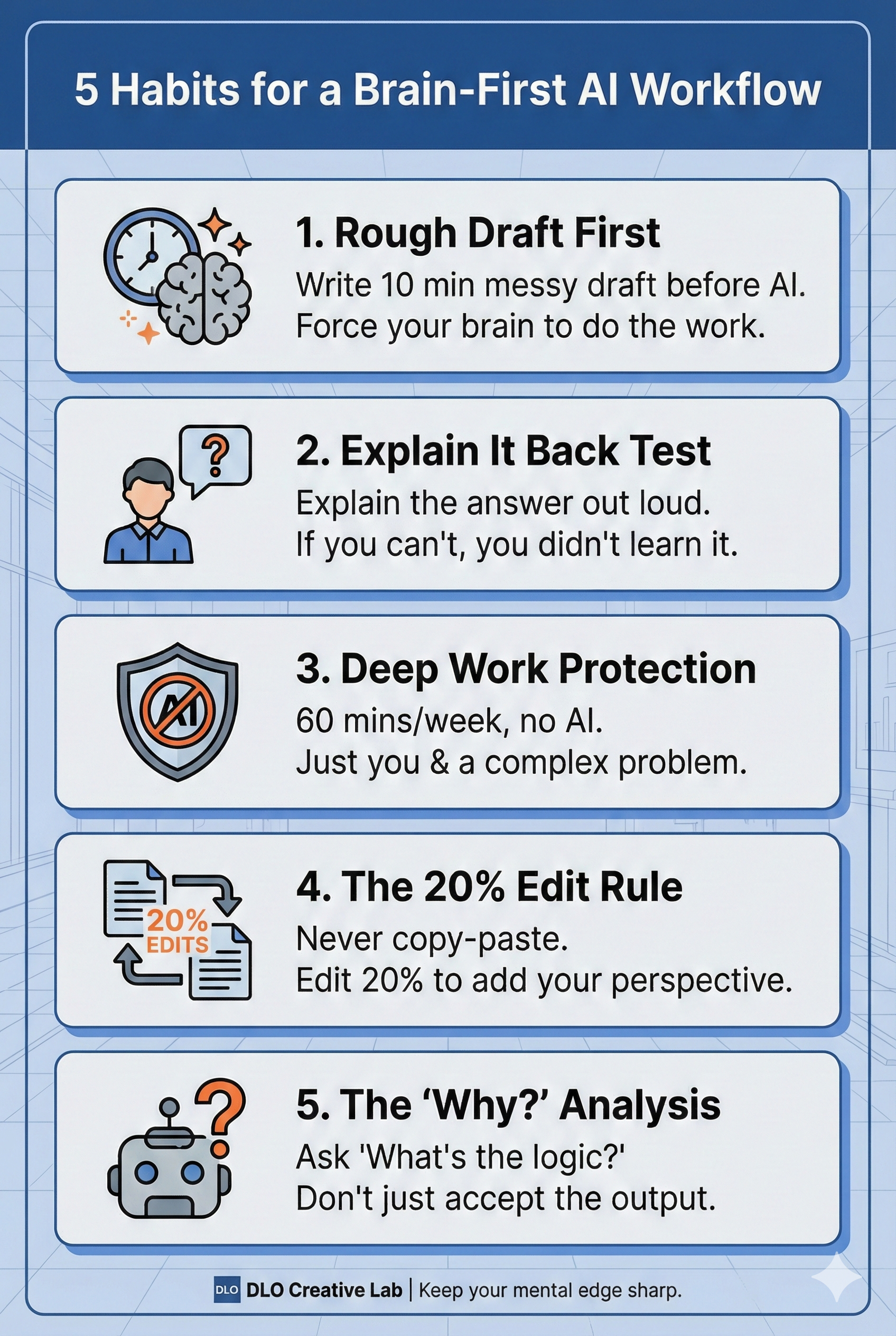

The Strategic Framework: Evidence-Informed Habits for Healthy AI Use

Alright, enough theory. Let's talk about what you actually do about this. These are strategic approaches based on how cognition actually works.

Habit 1: The "Rough Draft First" Rule

Difficulty: Low | Impact: High

Principle it addresses: Generation effect + cognitive ownership

How it works: Before using ChatGPT for any writing task, spend 10 minutes creating your own messy first draft. Bullet points. Sentence fragments. Stream of consciousness. Whatever. THEN give that to ChatGPT to polish, expand, or reorganize.

Why this matters: You're building the neural pathways first (encoding the thinking), then using AI for refinement (efficiency gain). You maintain cognitive ownership because the ideas originated in your brain. Bonus: ChatGPT's output will be more specific to your actual thinking, not generic AI writing.

Implementation: Set a 10-minute timer. Open a blank document. Write badly. Give yourself permission to write a terrible first draft; that's literally the point. Only when the 10 minutes are up do you open ChatGPT.

Habit 2: The "Explain It Back" Test

Difficulty: Low | Impact: High

Principle it addresses: Retrieval strength + genuine understanding

How it works: After ChatGPT generates something for you (a summary, an explanation, a research synthesis), close ChatGPT and explain the key points in your own words to someone else (or just write them out for yourself).

Why this matters: If you can't explain it without looking, you didn't actually learn it. You consumed information, but you didn't encode it. This test reveals whether you used AI as a thought partner (you can explain it) or a thought replacement (you can't).

Implementation: Keep an "explain it back" doc or recording app handy. After using ChatGPT for research or learning, spend 5 minutes writing or recording a response, "Here's what I learned...", in your own words, without looking at ChatGPT's output. If you can't do it, you need to re-engage with the material.

Habit 3: The "Deep Work Block" Protection

Difficulty: Medium | Impact: Very High

Principle it addresses: Desirable difficulty + sustained cognitive effort

How it works: Designate one 60-90 minute block per week where ChatGPT is completely off-limits. During this time, work on something cognitively demanding, such as writing a design rationale, solving a complex code compliance issue, or thinking through a client problem. Just you and your brain.

Why this matters: You're deliberately practicing the mental effort of sustained, focused thinking without the escape hatch of AI assistance. This is where cognitive strength gets built: not in the easy tasks, but in the hard ones where you have to push through difficulty.

Implementation: Schedule this time in your calendar. Treat it like a client meeting: non-negotiable. Turn off ChatGPT (actually close the tab). Put your phone in another room. For 60-90 minutes, your brain does the heavy lifting.

Habit 4: The "20% Edit" Rule

Difficulty: Medium | Impact: Medium

Principle it addresses: Active processing + critical thinking

How it works: Never use ChatGPT output without editing it substantially. Aim to change at least 20% of what it generates: not just tweaking words, but reorganizing logic, adding your perspective, removing generic phrases, and inserting specific examples.

Why this matters: Editing forces you to critically evaluate the content, think about what's actually accurate or relevant, and engage cognitively with the material. It's the difference between passive consumption and active processing.

Implementation: Track this for a week. Paste ChatGPT's output into a doc, make your edits in track changes mode, then estimate what share of the text you changed. If you're only changing 10-15%, you're not engaging enough.
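If eyeballing tracked changes feels too fuzzy, a few lines of code can give you a rough number. This is a minimal sketch, not a standard tool: the function name `percent_changed` is hypothetical, and it assumes that a word-level comparison with Python's built-in `difflib.SequenceMatcher` is a reasonable proxy for "how much did I edit."

```python
import difflib


def percent_changed(original: str, edited: str) -> float:
    """Estimate what percentage of a text changed between two versions.

    Compares the texts word by word (whitespace-separated tokens) using
    difflib.SequenceMatcher. ratio() returns 2*M/T, where M is the number
    of matching words and T is the total word count of both versions,
    so 1.0 means identical and 0.0 means nothing in common.
    """
    original_words = original.split()
    edited_words = edited.split()
    # autojunk=False stops difflib from ignoring very common words in long texts
    matcher = difflib.SequenceMatcher(a=original_words, b=edited_words, autojunk=False)
    return round((1 - matcher.ratio()) * 100, 1)


if __name__ == "__main__":
    # Hypothetical example texts: paste ChatGPT's output and your edited version here.
    ai_draft = "The building uses passive ventilation to reduce energy use."
    my_edit = "The building uses stack ventilation through the central atrium to cut cooling loads."
    print(f"Changed: {percent_changed(ai_draft, my_edit)}%")
```

Treat the result as a rough signal. Because the score counts words in both versions, adding a lot of new material raises it just as rewriting does, and moving a paragraph elsewhere can register as a change even if you didn't reword it.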

Habit 5: The "Why Did AI Suggest This?" Analysis

Difficulty: High | Impact: Medium

Principle it addresses: Critical thinking + understanding reasoning

How it works: When ChatGPT gives you a solution or recommendation, don't just accept it. Ask yourself (or ask ChatGPT): "Why is this the answer? What's the underlying logic? What assumptions is this based on?"

Why this matters: This habit keeps your critical thinking engaged. You're not just accepting AI output as truth; you're evaluating it, questioning it, and understanding the reasoning behind it. This is what separates AI-assisted thinking from AI-replaced thinking.

Implementation: Add a step to your ChatGPT workflow: After you get an answer, write one sentence: "This works because..." or "The logic here is..." If you can't articulate why the solution makes sense, you haven't actually understood it; you've just copied an answer.

The takeaway: These habits aren't about avoiding AI. They're about using AI in ways that keep your cognitive skills sharp while still getting efficiency gains. Think of them as a workout routine for your brain in the age of AI assistance.

The Implementation Plan: Making This Actually Happen

The sustainability key: Don't try to implement all five habits at once. You'll burn out and quit. Instead, add one new habit every 2-3 weeks until you've built a stack that feels sustainable.

Track it simply: Use a basic habit tracker (even just checkmarks on a calendar). The goal isn't perfection; it's consistency. If you hit 70-80% adherence, you're winning.

The takeaway: Implementation is about building sustainable patterns, not achieving perfection. Start small, track honestly, adjust as needed.

The Reality Check: Myths vs. What We Actually Know

Let's end where we started, with a clear-eyed look at what's real and what's hype.

Myth vs. Reality

MYTH: "ChatGPT is rotting your brain and making you dumber."

REALITY: AI doesn't damage your brain. But overreliance on AI for cognitive tasks means you're not practicing certain mental skills, and like any skill you don't practice, they'll weaken over time. This is reversible through intentional habit change.

MYTH: "You need to quit AI completely to protect your cognitive health."

REALITY: The goal isn't AI abstinence; it's strategic AI partnership. The calculator didn't destroy mathematical ability for people who learned math first. GPS didn't destroy navigation for people who understand maps. AI won't destroy thinking for people who maintain strong cognitive habits.

MYTH: "We have definitive long-term studies proving AI hurts cognition."

REALITY: We don't. We have principles from cognitive psychology (generation effect, desirable difficulty, neuroplasticity) and analogies from other technologies (GPS, calculators, spell-check). But ChatGPT has only been widely used since its public launch in late 2022. Long-term cognitive effects? We're living through the experiment right now.

MYTH: "If I just use AI for boring tasks and do the important thinking myself, I'm fine."

REALITY: Partially true, but misleading. Even "boring" tasks involve cognitive skills: organization, articulation, logical sequencing. If you consistently outsource these, you're still weakening those muscles. The key is maintaining some practice across all cognitive skills, even the "boring" ones.

The Final Perspective

Here's what I've come to believe after wrestling with this question myself:

AI is a cognitive power tool. Power tools are dangerous when you don't know what you're building. They're transformative when you do.

The danger isn't AI itself; it's the pattern of reaching for AI as a first resort rather than a strategic partner. It's the gradual slide from "AI helps me work faster" to "I can't work effectively without AI."

But here's the thing: you control that pattern. You decide when to struggle through difficult thinking and when to offload. You choose whether to generate first or prompt first. You determine whether AI amplifies your capabilities or replaces them.

Your brain is adaptive. It will shape itself around whatever you practice. If you practice outsourcing thinking, you'll get better at outsourcing and worse at independent thought. If you practice using AI strategically while maintaining strong cognitive habits, you'll get better at both thinking AND leveraging AI effectively.

The tools don't determine the outcome. Your habits do.

So here's my challenge to you: You don't need to fear AI. You need to be intentional about how you use it.

Your First Step: Start Tomorrow Morning

Don't overthink this. Here's what you do tomorrow:

Tomorrow morning, pick ONE task where you'd normally go straight to ChatGPT. A project description. An email. A summary. Whatever.

Instead: Set a timer for 10 minutes. Open a blank document. Write your rough draft first. Messy is fine. Incomplete is fine. Just generate the thinking yourself before you hand it to AI.

After 10 minutes: Now use ChatGPT. Give it your rough draft. Ask it to refine, expand, improve. Edit its output significantly.

At the end: Notice how you feel. Do you remember the content better? Do you feel more ownership over the final product? Does the ChatGPT output feel more specific to your actual thinking?

That's it. That's your first step.

Not a complete AI detox. Not five new habits at once. Just one 10-minute practice of generating first, then leveraging AI second.

Do that tomorrow. Do it again the next day. And the day after that.

Because the question isn't whether AI will change how we think. It already has.

The question is whether we'll let it change us by default or whether we'll be intentional about who we become in partnership with these tools.

Your brain is plastic. Your habits are changeable. And the best time to start building healthier AI patterns was yesterday.

The second-best time is tomorrow morning.